Social media giant TikTok has announced a spate of new mental well-being features and resources to mark World Suicide Prevention Month in September. In addition to fine-tuning its existing search interventions related to self-harm and suicide, the company has launched a new guide developed in partnership with mental health experts, as well as a dedicated guide on eating disorders.

"We care deeply about our community, and we always look for new ways in which we can nurture their well-being. That's why we're taking additional steps to make it easier for people to find resources when they need them on TikTok," the company said in a blog post on Tuesday.

TikTok said it already has strict community guidelines in place that ban the promotion, glorification or normalisation of suicide, self-harm or eating disorders, but that it's now also taking additional steps "to make it easier for people to feel safe and find support".

New well-being guides have been developed in partnership with the International Association for Suicide Prevention, Crisis Text Line, Live For Tomorrow, Samaritans of Singapore and Samaritans (UK). The guides, which are available on the company's Safety Centre, also offer tips to help users engage with someone who may be struggling or in distress.

Search interventions have also been updated.

"When someone searches for words or phrases such as #suicide, we'll direct them to local support resources such as the Crisis Text Line helpline, where they can find support and information about treatment options," TikTok said.

Content from other creators sharing their personal experiences with mental well-being, information on where to seek support and advice on how to talk to loved ones about these issues will also appear.

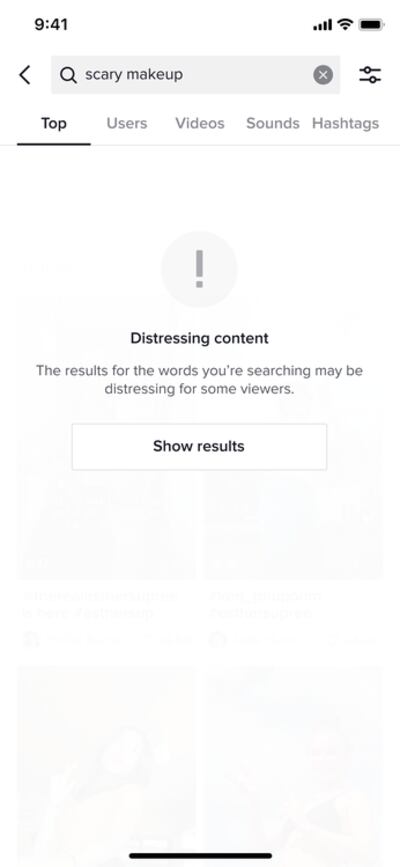

Stronger content warnings have also been enforced, starting from September. When a user searches for terms that could bring up content that some may find distressing, for example "scary make-up", the search results page will be covered with an opt-in viewing screen. Users will be able to click "show results" to continue to see the content.

A dedicated guide to eating disorders has also been added to the Safety Centre, with the help of the National Eating Disorders Association, National Eating Disorder Information Centre, Butterfly Foundation and Bodywhys. The resources provide support and advice on eating disorders to teenagers, caregivers and educators. There will also be regular public service announcements on certain hashtags such as #whatIeatinaday, to increase awareness and provide support to the community, the company said.

TikTok's latest move comes a day after an article in The Wall Street Journal, titled "Facebook Knows Instagram Is Toxic for Teen Girls", claimed that internal studies by Facebook over the past three years showed how Instagram affects its young user base.

“Thirty-two per cent of teen girls said that when they felt bad about their bodies, Instagram made them feel worse,” researchers reportedly wrote.

The WSJ cited documents it had viewed and said more than 40 per cent of Instagram’s users are aged 22 and younger.

Karina Newton, head of public policy at Instagram, said in a blog post that the story focused on "a limited set of findings and casts them in a negative light".

"From our research, we’re starting to understand the types of content some people feel may contribute to negative social comparison, and we’re exploring ways to prompt them to look at different topics if they’re repeatedly looking at this type of content," she wrote. "We’re cautiously optimistic that these nudges will help point people towards content that inspires and uplifts them, and to a larger extent, will shift the part of Instagram’s culture that focuses on how people look."

Earlier this year, TikTok announced it had removed nearly 7.3 million accounts suspected of belonging to underage children.

Children aged 13 and over are allowed to use the platform, but accounts belonging to users between the ages of 13 and 15 are set to private by default.